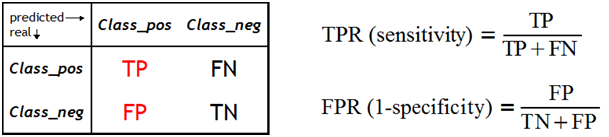

In the previous parts of our tutorial we discussed:

In this fourth part of the tutorial we will discuss the ROC curve.

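The ROC (Receiver Operating Characteristic) curve is one of the methods for visualizing classification quality. It shows the dependency between TPR (True Positive Rate, TP / (TP + FN)) and FPR (False Positive Rate, FP / (FP + TN)) as the classifier's decision threshold varies.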
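To make TPR and FPR concrete, here is a minimal Python sketch that computes the points of a ROC curve from true labels and classifier scores. It assumes binary labels (1 = positive, 0 = negative), treats a sample as predicted positive when its score is at or above the threshold, and uses every distinct score as a threshold. The function name `roc_points` and the sample data are hypothetical, chosen only for illustration.

```python
from typing import List, Tuple


def roc_points(y_true: List[int], scores: List[float]) -> List[Tuple[float, float]]:
    """Return (FPR, TPR) pairs, one per distinct score threshold.

    Assumption: a sample is predicted positive when score >= threshold,
    and y_true contains at least one positive (1) and one negative (0).
    """
    pos = sum(1 for y in y_true if y == 1)  # TP + FN
    neg = len(y_true) - pos                 # FP + TN
    # Threshold above every score: nothing is predicted positive.
    points = [(0.0, 0.0)]
    # Sweep thresholds from the highest score down to the lowest.
    for t in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(y_true, scores) if s >= t and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= t and y == 0)
        points.append((fp / neg, tp / pos))  # (FPR, TPR)
    return points


# Hypothetical labels and scores for five samples.
labels = [1, 1, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3]
print(roc_points(labels, scores))
```

Plotting these points with FPR on the x-axis and TPR on the y-axis gives the ROC curve; the lowest threshold always yields the point (1, 1).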

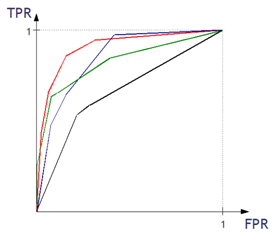

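The more convex the curve (the closer it bends toward the upper-left corner, where FPR = 0 and TPR = 1), the better the classifier. The curves of two classifiers can cross, however: in the example below, the “green” classifier is better in area 1, and the “red” classifier is better in area 2.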

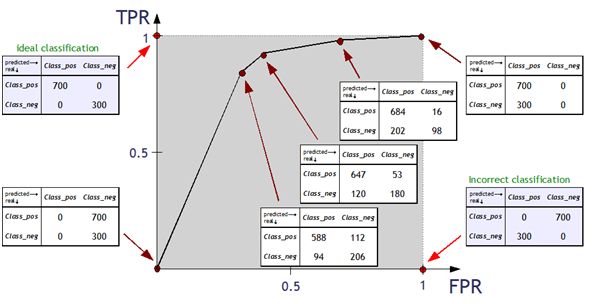

Example:

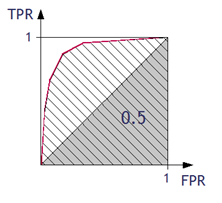

The quality of classification can be determined from the ROC curve by calculating the AUC coefficient (Area Under the ROC Curve) and the Gini coefficient (GC).

The higher the value of the AUC coefficient, the better. AUC = 1 means a perfect classifier, AUC = 0.5 is obtained for a purely random classifier, and AUC < 0.5 means the classifier performs worse than a random one.
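As an illustration, AUC can be approximated by summing trapezoids under the ROC points. The sketch below assumes the curve is already available as a list of (FPR, TPR) pairs; the name `auc_trapezoid` and the example points (a hypothetical classifier) are illustrative only.

```python
from typing import List, Tuple


def auc_trapezoid(points: List[Tuple[float, float]]) -> float:
    """Area under a ROC curve given as (FPR, TPR) points, via the trapezoidal rule."""
    pts = sorted(points)  # order by FPR (then TPR) so the curve runs left to right
    area = 0.0
    for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
        # Width along the FPR axis times the average height (TPR).
        area += (x1 - x0) * (y0 + y1) / 2.0
    return area


# Reference cases matching the values quoted in the text.
print(auc_trapezoid([(0.0, 0.0), (0.0, 1.0), (1.0, 1.0)]))  # perfect classifier
print(auc_trapezoid([(0.0, 0.0), (1.0, 1.0)]))              # random (diagonal)

# Hypothetical ROC points of some real-world classifier.
example_points = [(0.0, 0.0), (0.0, 1 / 3), (0.0, 2 / 3),
                  (0.5, 2 / 3), (0.5, 1.0), (1.0, 1.0)]
print(auc_trapezoid(example_points))
```

In practice, libraries such as scikit-learn compute the same quantity directly from labels and scores with `sklearn.metrics.roc_auc_score`.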

The higher the value of GC, the better. GC = 1 denotes a perfect classifier, GC = 0 denotes a purely random one.
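The text does not give a formula for GC. A common definition, assumed here, is `GC = 2 * AUC - 1`, which agrees with the values above: AUC = 1 gives GC = 1 and AUC = 0.5 gives GC = 0. The helper name `gini_from_auc` is hypothetical.

```python
def gini_from_auc(auc: float) -> float:
    """Gini coefficient under the assumed definition GC = 2 * AUC - 1."""
    return 2.0 * auc - 1.0


print(gini_from_auc(1.0))  # → 1.0 (perfect classifier)
print(gini_from_auc(0.5))  # → 0.0 (purely random classifier)
```

Under this definition, a classifier worse than random (AUC < 0.5) has a negative GC.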

The last part of our tutorial will be dedicated to the LIFT curve.